Emote

A behavioral specification framework for AI and trust-sensitive systems.

What Emote is

Emote is a reusable language for trust-sensitive moments: the points where people hesitate, second-guess, or need reassurance.

It turns emotional research signals into portable design guidance — patterns and behavior tokens — that can be applied across UI, AI prompts, and support workflows.

Why I created it

Most systems are optimized for success paths. AI demos assume confidence.

My work in complex, regulated products taught me the opposite: the moments that matter most happen when users are confused, anxious, or something breaks.

Emote is my attempt to make those human moments first-class design inputs, not an afterthought.

What breaks in AI systems

- Explaining uncertainty without sounding evasive

- Confirming consent before action

- Handling errors without blame

- Repairing trust after something goes wrong

These failures usually aren’t technical. They’re behavioral and communicative.

How Emote works

Emote is organized into a simple stack:

- Trust moments: situations such as ambiguity, consent, and repair.

- Patterns: named playbooks for what “good” behavior looks like in that moment.

- Behavior tokens: small, reusable commitments (e.g. “confirm before action,” “name risk transparently”).

The goal is alignment: same language, same intent, across the stack.
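To make the stack concrete, here is a minimal TypeScript sketch of how the three layers could be modeled as data. Only the trust moments (ambiguity, consent, repair) and the two token phrases come from the framework as described above. The type names, token IDs, the pattern name, and the `commitmentsFor` helper are hypothetical, and assigning `P01` to a consent pattern is an assumption made purely for illustration.

```typescript
// Hypothetical data model for Emote's three layers: trust moments, patterns, behavior tokens.
// Only the moment names and the two token phrases come from the Emote write-up;
// all identifiers and the P01 mapping are illustrative assumptions.

type TrustMoment = "ambiguity" | "consent" | "repair";

interface BehaviorToken {
  id: string; // hypothetical stable identifier
  commitment: string; // the plain-language commitment the token stands for
}

interface Pattern {
  id: string; // ID format borrowed from the kit's P01–P06 tags
  name: string; // hypothetical playbook name
  moment: TrustMoment; // the trust moment this playbook addresses
  tokens: BehaviorToken[]; // commitments a conforming system must keep
}

const confirmBeforeAction: BehaviorToken = {
  id: "confirm-before-action",
  commitment: "confirm before action",
};

const nameRiskTransparently: BehaviorToken = {
  id: "name-risk-transparently",
  commitment: "name risk transparently",
};

// Assumed pattern: the source does not say which pattern ID covers consent.
const consentCheckpoint: Pattern = {
  id: "P01",
  name: "Consent checkpoint",
  moment: "consent",
  tokens: [confirmBeforeAction, nameRiskTransparently],
};

// Collect every commitment that applies in a given trust moment, in pattern order.
function commitmentsFor(moment: TrustMoment, patterns: Pattern[]): string[] {
  const result: string[] = [];
  for (const pattern of patterns) {
    if (pattern.moment !== moment) continue;
    for (const token of pattern.tokens) {
      result.push(token.commitment);
    }
  }
  return result;
}

const consentDuties = commitmentsFor("consent", [consentCheckpoint]);
// consentDuties: ["confirm before action", "name risk transparently"]
```

Keeping tokens as separate objects lets several patterns reuse the same commitment, which is one way to get “same language, same intent” across UI, prompts, and support workflows.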

How I built it

- Research + synthesis: drew from years of qualitative research in regulated systems; abstracted recurring emotional failure points.

- Figma Design System: published to the Figma Community — nine annotation components, six pattern cards, two annotated AI handoff screens.

- arXiv preprint: an academic paper, citing peer-reviewed work, that grounds the framework in trauma-informed design, consent ethics, and HCI research.

- Custom code: a clean Next.js site, deployed on Vercel and versioned on GitHub.

A Figma annotation kit

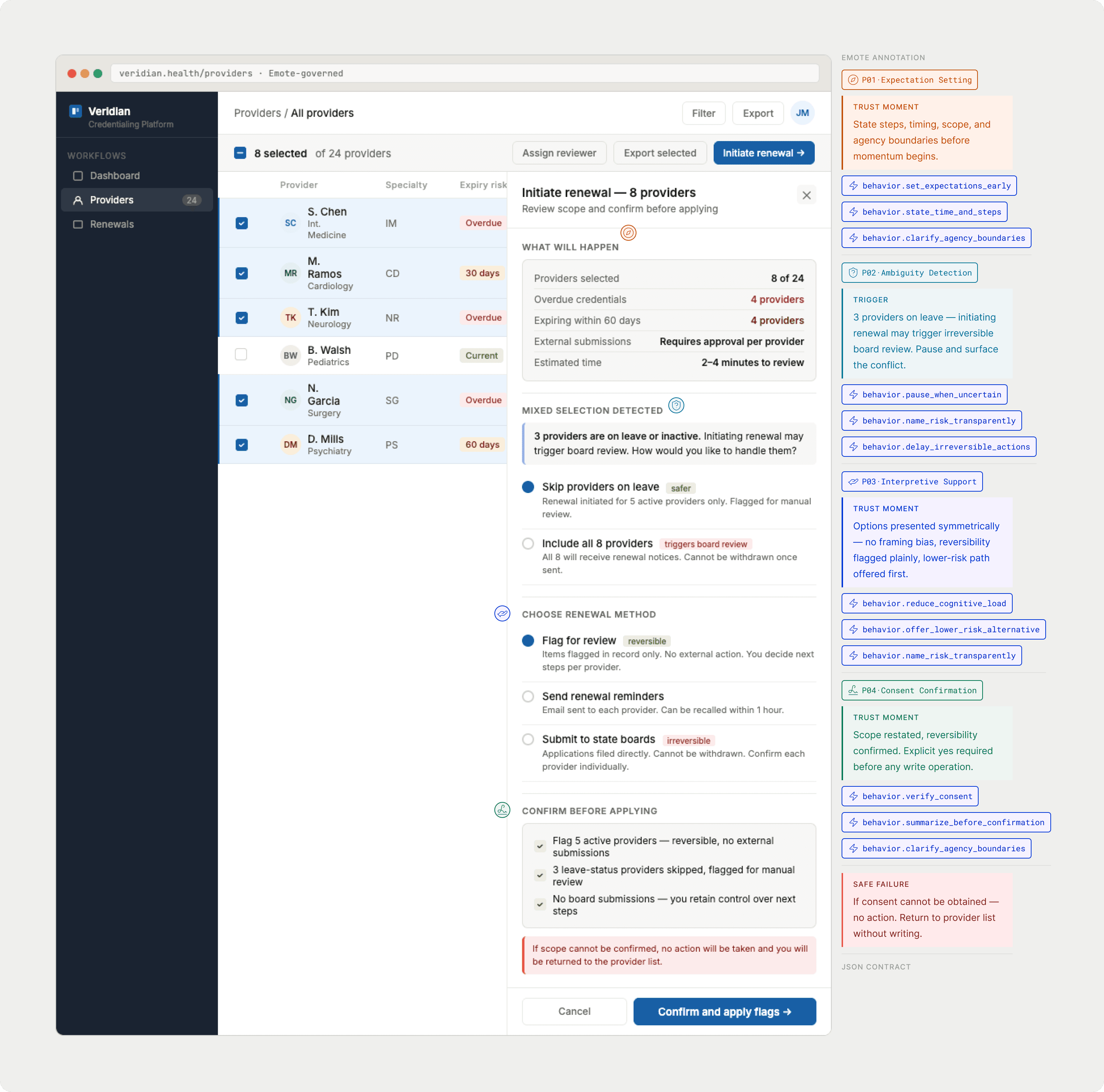

The framework ships with a published Figma design system: nine annotation components for specifying behavioral obligations directly on UI frames, alongside your existing design system components.

The kit includes PatternStamp tags (P01–P06), TokenTags for the 19-token vocabulary, BehaviorCallouts, TrustMomentCallouts, GapMarkers for undocumented behavior, SafeFailureNotes, and FrameBadges for compliance status. Each component is visually distinct from product UI, so it reads as annotation, not interface.

Two annotated AI handoff screens show the full system in practice, including a JSON contract block that makes the behavioral spec machine-readable.
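The keys of Emote’s own JSON contract block aren’t shown here, so the following is a sketch under an assumed schema: a screen ID plus entries that pair a trust moment with a pattern reference and a list of behavior tokens. The hypothetical `checkContract` helper parses the JSON and flags entries with no tokens as gaps, loosely mirroring what a GapMarker does on a Figma frame.

```typescript
// Hypothetical schema for a machine-readable behavioral contract.
// The keys below are assumptions; Emote's own JSON contract block may differ.
interface ContractEntry {
  moment: string; // trust moment, e.g. "consent"
  pattern: string; // pattern reference, e.g. "P01"
  tokens: string[]; // behavior token IDs the UI or model must honor
}

interface BehaviorContract {
  screen: string;
  entries: ContractEntry[];
}

const KNOWN_MOMENTS = ["ambiguity", "consent", "repair"];

// Parse a contract and return a list of problems; an empty list means it passed.
// Throws if the input is not valid JSON. Entries without tokens are reported as gaps,
// loosely mirroring a GapMarker on a Figma frame.
function checkContract(json: string): string[] {
  const contract = JSON.parse(json) as BehaviorContract | null;
  if (!contract || !Array.isArray(contract.entries)) {
    return ["missing entries array"];
  }
  const problems: string[] = [];
  contract.entries.forEach((entry, i) => {
    if (KNOWN_MOMENTS.indexOf(entry.moment) === -1) {
      problems.push(`entry ${i}: unknown trust moment "${entry.moment}"`);
    }
    if (!Array.isArray(entry.tokens) || entry.tokens.length === 0) {
      problems.push(`entry ${i}: gap, no behavior tokens for "${entry.moment}"`);
    }
  });
  return problems;
}

const sampleContract = JSON.stringify({
  screen: "ai-handoff-1",
  entries: [
    { moment: "consent", pattern: "P01", tokens: ["confirm-before-action"] },
    { moment: "repair", pattern: "P02", tokens: [] },
  ],
});

const sampleProblems = checkContract(sampleContract);
// sampleProblems: ['entry 1: gap, no behavior tokens for "repair"']
```

A check like this could run in CI against the exported contract, so a screen with an unspecified trust moment fails before handoff rather than after release.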

Why this framework matters

- It treats behavior as a design output, not just UI.

- It’s tool-agnostic and stack-friendly.

- It prioritizes trust repair over novelty.

The core argument: current platforms let you shape tone and output. They don’t let you specify behavioral obligations, meaning what a system must do when consent is needed, when ambiguity is detected, or when something goes wrong. Emote closes that gap.
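One way to read “specify behavioral obligations” in code is as a guard the system cannot skip. The sketch below assumes a single obligation, “confirm before action”, with the risk named in the confirmation prompt. `ProposedAction`, `runWithConsent`, and the `confirm`/`execute` callbacks are hypothetical names, not part of Emote or any platform API.

```typescript
// Hypothetical runtime guard for one behavioral obligation: "confirm before action".
// ProposedAction, Outcome, runWithConsent, and the callbacks are illustrative names,
// not part of Emote or of any platform API.

interface ProposedAction {
  description: string; // what the system intends to do, in plain language
  requiresConsent: boolean; // true for consequential or irreversible actions
  risk?: string; // surfaced in the prompt ("name risk transparently")
}

type Outcome =
  | { status: "done"; result: string }
  | { status: "declined"; message: string };

// `confirm` stands in for whatever UI dialog or chat turn asks the user.
// `execute` performs the action and is only called once the obligation is met.
function runWithConsent(
  action: ProposedAction,
  confirm: (prompt: string) => boolean,
  execute: () => string
): Outcome {
  if (action.requiresConsent) {
    const riskNote = action.risk ? ` Risk: ${action.risk}.` : "";
    const approved = confirm(`I'm about to ${action.description}.${riskNote} Continue?`);
    if (!approved) {
      // Non-blaming wording: state what the system did not do, not what the user did.
      return { status: "declined", message: `No changes made. I did not ${action.description}.` };
    }
  }
  return { status: "done", result: execute() };
}

const askedPrompts: string[] = [];
const executions = { count: 0 };

const declined = runWithConsent(
  { description: "delete 3 saved drafts", requiresConsent: true, risk: "this cannot be undone" },
  (prompt) => {
    askedPrompts.push(prompt);
    return false; // the user says no
  },
  () => {
    executions.count += 1;
    return "deleted";
  }
);
// declined.status === "declined"; executions.count is still 0
```

Here the obligation lives in the control flow rather than in tone guidance: an action marked `requiresConsent` cannot run without an explicit yes, and the declined path reports what the system did not do instead of blaming the user.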

What I learned

Clarity is not only a writing skill — it’s a product behavior. Designing for trust means designing for what happens after the “happy path” ends.

Good AI design isn’t about sounding confident. It’s about knowing when not to.

What I’d do with a team

- Validate patterns inside real AI workflows.

- Measure trust recovery, not just task success.

- Integrate behavior tokens into design systems and prompt libraries.

- Build toward certification as a compliance layer for the EU AI Act.